Introduction

Several years ago, we greatly enjoyed following Doug Bagley's Great Language Shootout. As he has discontinued the project for the moment, we decided to create our own language performance testing framework and manage it as an open source project.

Our goals at this point are to provide a comparison of a variety of Free/Open Source programming languages, along with a cross-platform, extensible framework for running the tests.

Not Just About Speed

Speed is just one way to judge a programming language; other criteria are often just as important, if not more so. For example: how readable is the code? Will another programmer be able to pick it up and understand it? Modify it? How much existing code is there in the language for you to draw from? How good are the documentation and the resources you can turn to when you need help? We can't program a comparison of these, so we recommend that you look through the implementations of the tests for each language to get a feel for each one, and check out the resources for it listed at the URL.

Salvatore Sanfilippo and David N. Welton, December 2003

Results

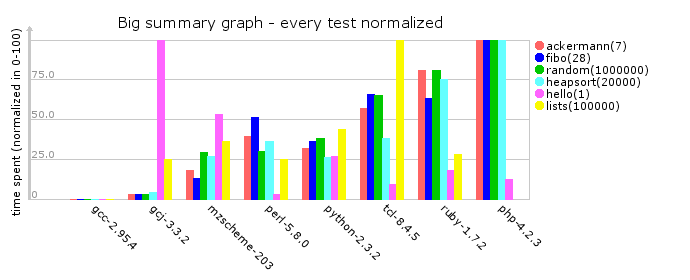

For the impatient, the following are summary graphs. The first shows the raw time, in milliseconds, spent executing each test; the second shows the results normalized to the range 0-100 for each test. You can click on the test names to see the test description and more detailed graphs for each test.
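To make the idea of normalizing to 0-100 concrete, here is a minimal sketch in Python. It assumes the slowest language in a test is scaled to 100 and the others proportionally. That scaling rule is an assumption made for illustration, not necessarily the one used to produce the graphs. The function name `normalize_to_100` and the sample timings are hypothetical.

```python
def normalize_to_100(times_ms):
    """Scale raw timings so the slowest entry scores 100.

    Assumption: scores are proportional to the slowest run in the
    same test; the real graphs may use a different rule.
    """
    slowest = max(times_ms.values())
    if slowest == 0:
        # Avoid dividing by zero when every run took no measurable time.
        return {lang: 0.0 for lang in times_ms}
    return {lang: 100.0 * t / slowest for lang, t in times_ms.items()}


# Hypothetical timings in milliseconds, not actual results.
sample = {"lang_a": 50, "lang_b": 200, "lang_c": 100}
print(normalize_to_100(sample))
```

Normalizing each test separately lets a quick test and a slow test share one chart, at the cost of hiding the absolute times.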

This table shows which tests have been implemented for which languages, with red cells indicating a missing test:

| | gcc | gcj | mzscheme | perl | php | python | ruby | tcl |
|---|---|---|---|---|---|---|---|---|
| ackermann | | | | | | | | |
| fibo | | | | | | | | |
| heapsort | | | | | | | | |
| hello | | | | | | | | |
| lists | | | | | | | | |
| random | | | | | | | | |

Last updated: Sun Jan 18 11:15:31 CET 2004